(Well, not for you, just for your business)

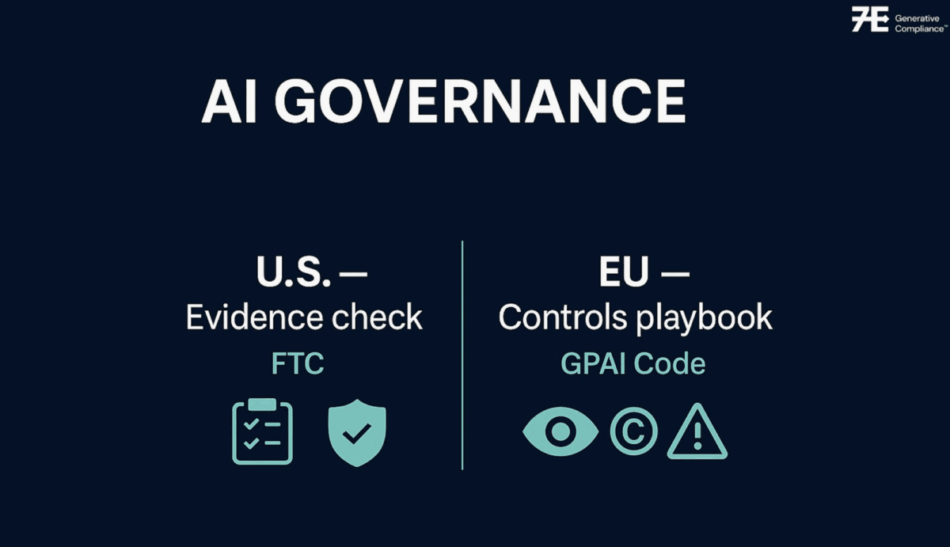

The US and the EU are signaling in the same direction.

U.S. – The FTC is getting ready to demand internal docs from OpenAI, Meta, and Character.AI on how chatbots affect kids’ mental health: think eval results, age-gating, incident logs, and retention rules. That’s an evidence check.

EU – The Commission’s GPAI Code of Practice is live: three chapters (Transparency, Copyright, Safety/Security) that translate the AI Act’s model obligations into controls you can actually run. It’s optional on paper, but it’s quickly becoming the default yardstick.

What top players are saying: Google and OpenAI say they will sign; Meta is currently a ‘no’.

Different risk appetites, same direction of travel: prove you know your model, your data, and your failure modes.

What does it all mean? Here is the bottom line.

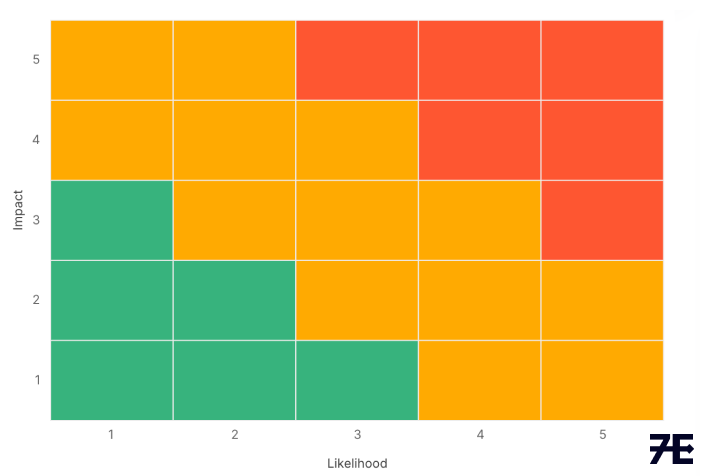

It’s the operating system that makes AI safe, lawful, and explainable by default. Policies are useless without controls + telemetry + evidence. If you can’t hand an auditor (or regulator) a clean bundle showing who owns the risk, what you tested, what broke, how you fixed it, and when you’ll retest, you don’t have governance. You have promises.

How technology should help (not hand-wave):

- Controls as data: map each obligation (FTC/COPPA, EU AI Act/GPAI) to a concrete control with an owner, review cadence, and machine-readable status. (Federal Trade Commission; Digital Strategy)

- Automatic evidence capture: versioned model cards, eval runs, red-team scenarios, and incident tickets auto-logged to the change you shipped.

- Risk registers that breathe: child-safety and vulnerable-user risks tied to guardrails (age assurance, content filters, escalation paths) and retention TTLs that actually delete. (Federal Trade Commission)

- Copyright accountability: document training data sources and filters, opt-outs, and output provenance; wire these to your release checklist per the GPAI Code. (Digital Strategy)
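
To make "controls as data" concrete, here is a minimal Python sketch. It assumes a hypothetical `Control` record and status vocabulary (`ok`, `review_overdue`, `missing_evidence`); the field names, IDs, and cadences are illustrative and do not come from the FTC, the AI Act, or the GPAI Code.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
import json


@dataclass
class Control:
    """One obligation mapped to one owned, reviewable control (hypothetical schema)."""
    control_id: str            # illustrative ID scheme, not a standard
    obligation: str            # e.g. "FTC/COPPA" or "EU AI Act/GPAI"
    description: str
    owner: str
    review_every_days: int     # review cadence
    last_reviewed: date
    evidence: list[str] = field(default_factory=list)  # IDs of eval runs, tickets, model cards

    def status(self, today: date) -> str:
        """Return a machine-readable status string for this control."""
        if not self.evidence:
            return "missing_evidence"
        if today - self.last_reviewed > timedelta(days=self.review_every_days):
            return "review_overdue"
        return "ok"


def export_status(controls: list[Control], today: date) -> str:
    """Serialize control statuses to JSON so dashboards or auditors can consume them."""
    rows = [
        {
            "control_id": c.control_id,
            "obligation": c.obligation,
            "owner": c.owner,
            "status": c.status(today),
        }
        for c in controls
    ]
    return json.dumps(rows, indent=2)


if __name__ == "__main__":
    # Example data is made up for illustration.
    controls = [
        Control("CS-01", "FTC/COPPA", "Age assurance on chat entry",
                "trust-safety", 90, date(2025, 8, 1), ["eval-run-001"]),
        Control("CR-02", "EU AI Act/GPAI", "Training data source log",
                "data-platform", 30, date(2025, 6, 1)),
    ]
    print(export_status(controls, date(2025, 9, 1)))
```

The point of the design is that status is computed from evidence and review dates rather than typed in by hand, so a control with no attached evidence can never report `ok`.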

So what should I do? Put these in place:

- A child-safety risk register for every chat flow (age gating, fallback behavior, human-in-the-loop), with pre-release evals stored and searchable. (Reuters)

- A GPAI Code mapping: transparency doc (model card + data lineage), copyright safeguards, systemic-risk testing, and an incident playbook, with controls linked to evidence. (Digital Strategy; Latham & Watkins)

- Data-retention proof: TTLs and deletion logs for minors’ data that match policy (and the FTC’s updated COPPA rule). (Federal Trade Commission)

- A release gate: no model or feature ships without an eval report, red-team delta, and sign-off recorded.
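
The release gate in the last item can be sketched as a small check that runs before anything ships. This is a minimal Python illustration under assumed names: `ReleaseCandidate`, `missing_artifacts`, and `can_ship` are hypothetical, and a real gate would usually live in your CI/CD pipeline and verify that the referenced artifacts actually exist.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReleaseCandidate:
    """A model or feature waiting to ship (hypothetical schema)."""
    name: str
    eval_report: Optional[str] = None      # ID or path of the stored eval report
    red_team_delta: Optional[str] = None   # what changed since the last red-team run
    signed_off_by: Optional[str] = None    # accountable owner who approved the release


def missing_artifacts(rc: ReleaseCandidate) -> list[str]:
    """List every required artifact that is absent or empty."""
    required = {
        "eval_report": rc.eval_report,
        "red_team_delta": rc.red_team_delta,
        "signed_off_by": rc.signed_off_by,
    }
    return [name for name, value in required.items() if not value]


def can_ship(rc: ReleaseCandidate) -> bool:
    """The gate: ship only when nothing is missing."""
    return not missing_artifacts(rc)


if __name__ == "__main__":
    # Example data is made up for illustration.
    rc = ReleaseCandidate(name="chat-flow-v2", eval_report="evals/chat-flow-v2.json")
    if not can_ship(rc):
        print(f"Blocked {rc.name}: missing {', '.join(missing_artifacts(rc))}")
```

Returning the list of missing items, rather than a bare yes/no, gives the blocked team an actionable message and leaves a record of why the release was held.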

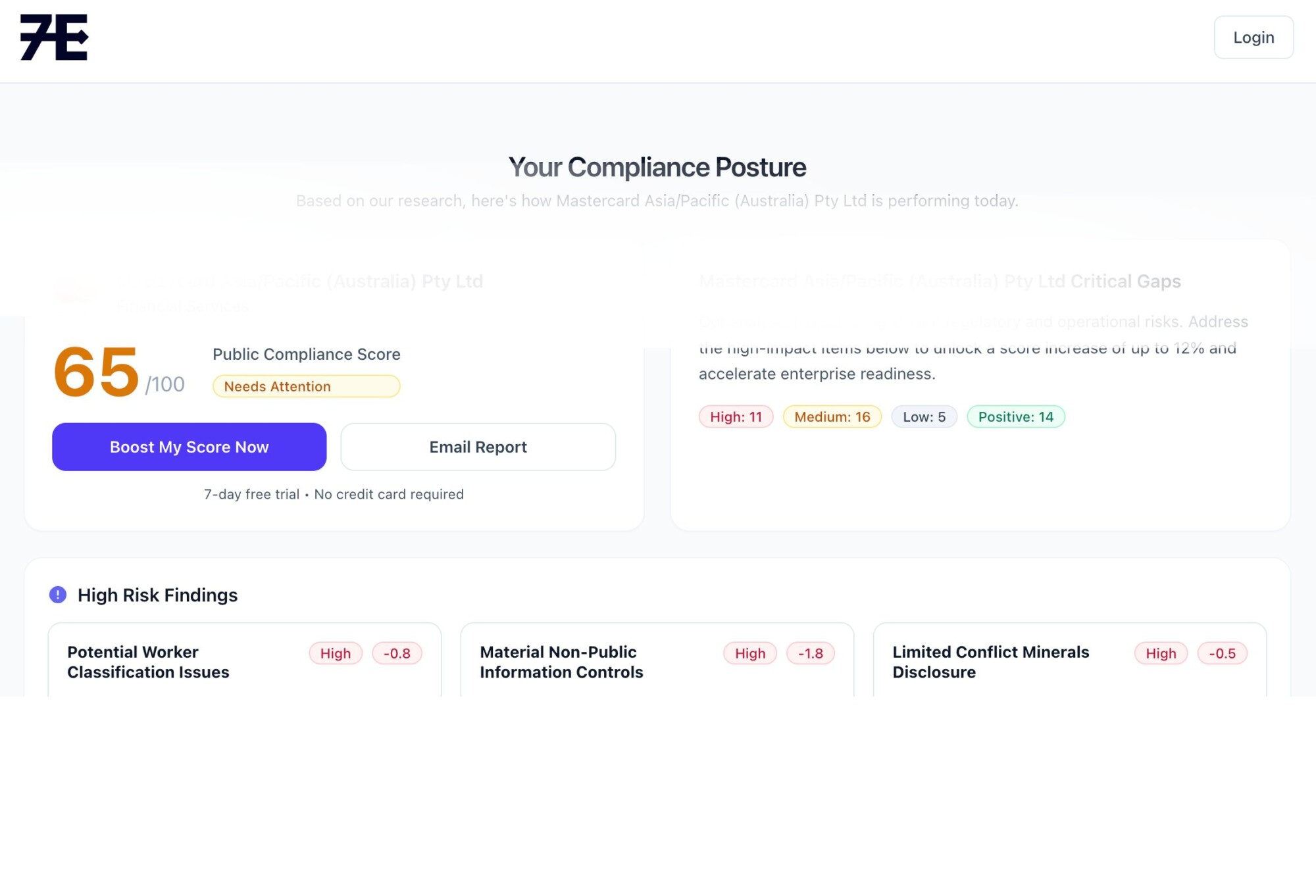

We are building the 7E AI Governance solution: turn obligations into living controls with evidence attached, so when someone says “show me,” you can, without a fire drill.